Coaching Letter #201

On Bayesian statistics and how that changes thinking about data

Hello, how are you, and thank you for subscribing to the Coaching Letter. This Coaching Letter is about what I have gleaned from the various books I’ve read this summer so far—well, some of them I started quite a long time ago, so I don’t want to give the impression that I’m a super fast reader. The theme is probably Bayesian inference, but I don’t want to tell you that in case you decide to go back to that detective novel you’ve been reading…

I think the most useful book I’ve read lately is How to Measure Anything, by Douglas Hubbard (which I mentioned briefly in this Coaching Letter). I actually had to read parts of it twice before I understood the major point; so here’s my restatement of what I think the point is. We’ve been taught in our graduate courses in measurement and statistics about standard deviations, randomized controlled trials, p-values, the null hypothesis, sample size, standard error of measurement, and so on. Early in my graduate school career I was in a counseling psychology program, and the textbook on psychometrics weighed at least 60lbs. I’ve seen smaller 1st graders. And I came away with the impression that measurement and assessment are highly technical endeavors not for the faint of heart. But this book offers a different perspective: we should think of measurement not as the pursuit of exactitude, but simply the reduction of uncertainty. This is super helpful. It strips away all the anxiety about meeting the criteria for reliability and validity and points out that you can learn a lot of really useful information just by asking a few people.

[Technical aside: what undergirds this book is a reliance on Bayesian statistics, which affords the constant updating of a hypothesis based on what is already known when new information becomes available, in contrast with what most of us were taught in graduate school, known as frequentist statistics. And we live in a Bayesian world, not a randomized controlled trial. Bayesian logic allows me to look at a school improvement plan and ask not only if this plan seems like it would work based on what it contains, but also whether similar plans have yielded their intended results in the past; and that knowledge will probably change the way I think about the latest plan. If you follow politics and especially polling closely, or listen to podcasts like Hidden Brain and 538 Politics, then you already know about the concept of priors, and how much we can learn about a topic based on how much we already know.]

What I really love about the book is the support it provides for the stance my colleagues and I have taken about a couple of things. First, think about data as information for decision making; we’ve used Dylan Wiliam’s adage that “People who espouse data-driven decision making tend to focus on the data. They collect data hoping that they may come in useful at some point in the future. Those who focus on decision-driven data collection decide what they want to do with the data before they collect the data, and collect only the data they need.” (If you want this as a slide, it’s currently no. 14 in this deck.) This seems to me a fairly adroit statement about what is wrong with data teams as they are typically constituted—Rydell and I wrote about this in Equitable School Improvement. Second, frequently the best way to reduce uncertainty is to act. We put so much emphasis on long planning processes and big launches, and we would be a lot better off if we took small steps and collected some data. Third, you don’t need a ton of data, and you don’t need a representative sample, you just need to figure out what information you need to decide what to try next.

Much of the work I’ve been involved with this past year has been about thinking about data and data teams differently. Some of that came from working on the data chapter in Equitable School Improvement (see this Coaching Letter for a summary of what’s in that chapter); some from working with several districts on changing the way that their data/department/grade level teams operate to be more like action research teams; and some from reading more about measurement and Bayesian statistics. Sometimes you need new language in order to be able to think differently. A huge breakthrough for me came when Rydell asked: “What if we stop talking about data teams and start talking about improvement teams?” In that moment, the work on data, the work on data teams, the shift from direct to indirect instructional leadership and the work on improvement science all came together in my head and I was able to see the nexus in a way that had not occurred to me before. The mission now is to revolutionize the way that data improvement teams are conceptualized, organized, and implemented, and to change concomitantly the data that are collected, the role of coaches, the role of leaders, and the flow of feedback. I now see improvement teams as one of the most under-leveraged structures available to districts in their work to improve instruction. Rydell, Tom, Andrew and I have some planning to do… watch this space.

There are a few related books that are also useful (not all from this summer!):

The Signal and the Noise, Nate Silver, is about predictions—economics, politics, weather forecasting, earthquakes—and why some methods are better than others. The book emphasizes the distinction between the "signal," which represents the useful information, and the "noise," which is the irrelevant or misleading data. Unsurprisingly, good prediction requires the ability to separate the signal from the noise, and the book explores how biases, flawed methodologies, and overconfidence can lead to poor predictions. Silver employs concepts from probability and statistics, such as Bayesian inference, to explain how predictions can be improved. The examples are really interesting and I really enjoyed the book.

Everything is Predictable, Tom Chivers, dives even deeper into the world of Bayesian thinking. This book is a useful companion to How to Measure Anything and The Signal and the Noise. It gives a lot more background and history than I knew before, and that made it a fun read.

The End of Average, Todd Rose, describes how we make mistakes when we try to condense multiple features of a phenomenon into a single number. And again, there is much here related to the work we do on various endeavors, in particular the three principles that Rose says should replace the way we typically think about average:

The jaggedness principle: Human characteristics cannot be reduced to a single number or an average. People are complex and multi-dimensional, and their abilities, traits, and skills vary widely.

The context principle: Behavior and performance are highly context-dependent. People's abilities and actions can change based on the environment and circumstances. I wrote about this in some detail in Coaching Letter #152,

The pathways principle: There is no one-size-fits-all path to success. Different people achieve their goals through various routes, and rigid, standardized systems often fail to recognize and accommodate this diversity.

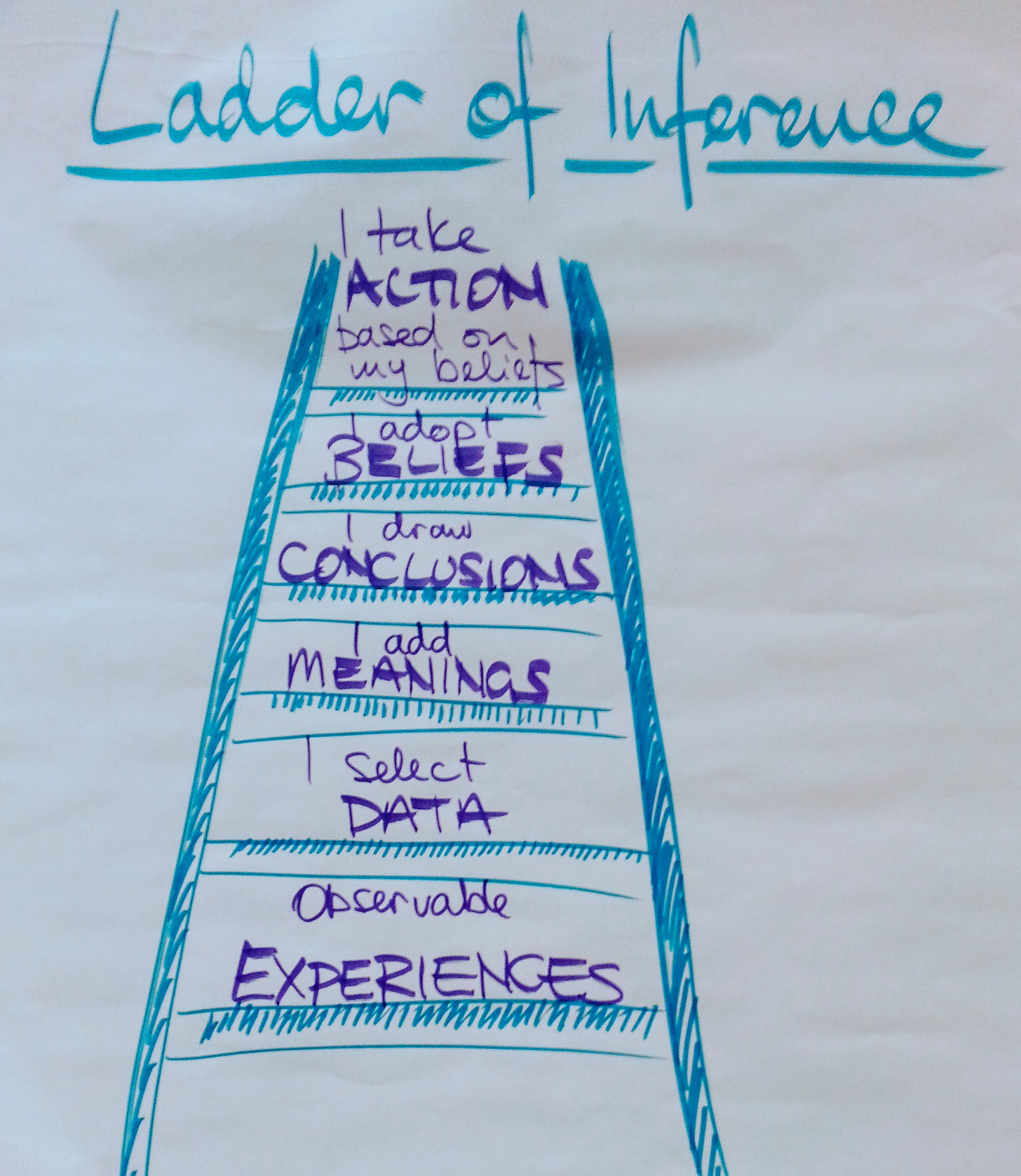

May Contain Lies, Alex Edmans, is yet another book about how easily we can be misled because of how we think about an issue. There was one really useful section, about how we think about issues as binary, when actually they are not, and those non-binary phenomena can be on a continuum, i.e. on a gradient from black to white, or they can be granular, a mixture of black and white, or both. For example, we talk about teachers as being ineffective or effective, but most teachers employ some mixture of less and more effective practices, and less effective teachers use a preponderance of ineffective practices, and highly effective teachers use mostly effective practices. Just as described in The End of Average, it’s possible to reduce the skill of any teacher to one number, but that doesn’t tell you much that’s useful, really. [Another technical aside. This book talks about the Ladder of Inference, which is a really important idea in the psychology of change. We use it all the time in our coaching training and our leadership development work. You can read more about it here, and a picture of it graces the top of this Coaching Letter. The original citation is Argyris, C. (1990). Overcoming Organizational Defenses: Facilitating Organizational Learning. But for some reason, May Contain Lies refers to the construct as the Ladder of Misinference, and that’s not the only place I’ve seen it mis-named lately. In the book Ask it’s called the Ladder of Understanding, and someplace else I saw it called the Ladder of Influence. No clue why anyone would take a really foundational idea and just decide to rename it. Amateurs.]

And finally, a reminder that many of the statistical tools used in social science have a long and racist history. We wrote about this in Equitable School Improvement in the all-important data chapter; and these books deal with the topic in some detail (I have mentioned most of them before):

Bernoulli's Fallacy: Statistical Illogic and the Crisis of Modern Science by Aubrey Clayton (2021).

Thicker than Blood: How Racial Statistics Lie by Tukufu Zuberi (2001).

White Logic, White Methods: Racism and Methodology edited by Tukufu Zuberi and Eduardo Bonilla-Silva (2008).

Superior: The Return of Race Science by Angela Saini (2019).

The Mismeasure of Man by Stephen Jay Gould (1981, revised and expanded edition in 1996).

All of these are great books and well worth reading.

If you want to chat about anything here, or if there is anything else I can do for you, please let me know! Best, Isobel