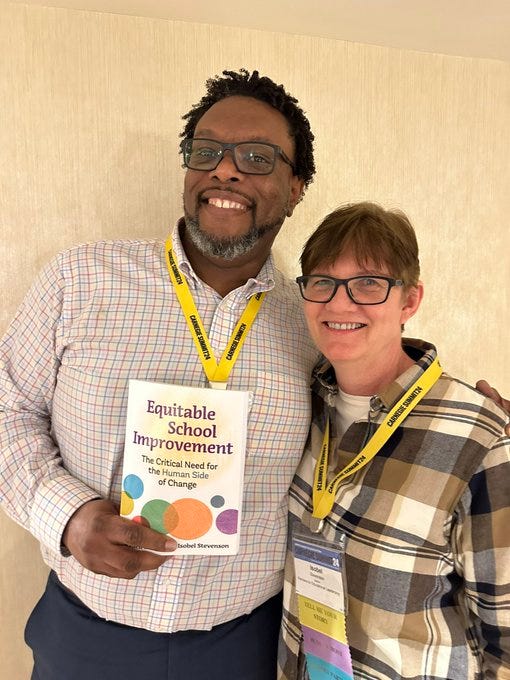

Friends, thank you for subscribing to the Coaching Letter. You rock. It has been a very busy few weeks. I just got back from the Carnegie Summit in San Diego, where my colleagues and I facilitated a one-day workshop on Coaching for Continuous Improvement at the beginning of the Summit and a Huddle at the end (which is improvement science speak for a quick conversation about where you want to be, where you are now, and how you are going to get from here to there). We also attended several sessions and keynotes over the course of the two main days of the conference. It was awesome. It was so fabulous to spend time with such terrifically smart people, who are doing the kind of work that I believe will really make a difference in improving schools for kids, and to learn so much from them. Also, Rydell and I got our hands on our new book for the first time, so that was fabulous.

So I really should write about the conference, or the book, or both, but this Coaching Letter is a short tribute to Professor Daniel Kahneman, who died on March 27. He was a psychologist who managed to get a Nobel Prize in Economic Sciences for his work in a field that has become known as behavioral economics. His ideas and his writing have informed so much of what I think and write about, and incorporate into my own work, especially in coaching and continuous improvement. I looked to see how often I’ve written about his work, and he appears in six Coaching Letters, which is way up there with Amy Edmondson and Chris Argyris.

Kahneman and his colleague Amos Tversky were instrumental in changing the way that we think about rationality. We assume, ironically, that people are rational—that they make decisions that are logical, that is, in line with their best interests, and reliable, meaning that they would make the same decision no matter how the facts are presented. But despite the rational nature of this assumption of rationality, it turns out that humans are not rational, and that they are irrational in predictable ways. In other words, not only do we err, we err systematically. Kahneman and Tversky were the researchers who, beginning with the publication of “Belief in the Law of Small Numbers” in 1971, drew attention to the mistakes that we make in logic and decision making, which are now commonly known as cognitive biases. Here are some of the most well-known biases:

Anchoring Bias: The tendency to rely too heavily on the first piece of information encountered (the "anchor") when making decisions, even if the anchor is irrelevant or unreliable.

Availability Heuristic: The tendency to overestimate the likelihood of events based on how easily they come to mind. This can lead to the overestimation of rare or dramatic events and the underestimation of more common occurrences.

Representativeness Heuristic: The tendency to judge the likelihood of an event based on how closely it resembles a prototype or stereotype. This can lead to errors in judgment when the prototype is not a reliable indicator of the actual probability.

Confirmation Bias: The tendency to seek out, interpret, or remember information in a way that confirms one's preexisting beliefs or hypotheses, while disregarding or downplaying contradictory evidence.

Overconfidence Bias: The tendency to overestimate one's own abilities, knowledge, or judgment. This can lead individuals to take excessive risks or make overly optimistic predictions about future outcomes.

Loss Aversion: The tendency to prefer avoiding losses over acquiring equivalent gains. People often place greater emphasis on preventing losses than on achieving gains, leading to risk-averse behavior.

Hindsight Bias: The tendency to perceive events as having been more predictable after they have occurred. People tend to overestimate their ability to have foreseen the outcome, overlooking the uncertainty and complexity of the situation.

Framing Effect: The tendency for people's decisions to be influenced by how information is presented or framed. The same information presented in different ways can lead to different decisions or judgments.

Endowment Effect: The tendency for people to value objects or investments more highly simply because they own them. This can lead to reluctance to sell possessions or irrational attachment to investments.

Sunk Cost Fallacy: The tendency to continue investing time, money, or effort into a decision or project even when it no longer seems worthwhile, simply because of the investment already made.

There are, in fact, hundreds of named biases now—I keep the Cognitive Bias Codex handy.

In Thinking, Fast and Slow, Kahneman frames these biases in terms of System 1 and System 2, which are terms employed by Kahneman but not coined by him—they come from the work of Keith Stanovich and Richard West in this article, which also contains this great description of how our thinking process can fail us:

A substantial research literature – one comprising literally hundreds of empirical studies conducted over nearly three decades – has firmly established that people’s responses often deviate from the performance considered normative on many reasoning tasks. For example, people assess probabilities incorrectly, they display confirmation bias, they test hypotheses inefficiently, they violate the axioms of utility theory, they do not properly calibrate degrees of belief, they overproject their own opinions on others, they allow prior knowledge to become implicated in deductive reasoning, and they display numerous other information processing biases.

Stanovich and West suggest, and clearly Kahneman agreed, that a lot of our biases can be theorized by thinking about the brain as undertaking two kinds of processing at once. System 1 thinking is intuitive, automatic, and quick, relying on heuristics and biases. It operates effortlessly and unconsciously, making snap judgments based on patterns and past experiences. In contrast, System 2 thinking is deliberate, analytical, and slow. It involves conscious reasoning, critical thinking, and effortful cognitive processes. We avoid it because it’s harder, takes longer, and tires us out.

We could not function without System 1. It’s impossible to pay attention to all available information, it’s impossible to think everything through from the beginning, it’s impossible to evaluate all possibilities before taking action. The speed with which System 1 allows us to navigate the world makes it completely invaluable. At the same time, reliance on our overly confident yet limited view of the world means that we frequently take the top-of-mind choice rather than the less obvious but possibly better one. Reliance on mental models also frequently entails reliance on stereotypes, which can allow racial, gender, and other biases to infiltrate and influence our decisions and our behavior.

As someone who works in equity, strategy, and coaching, knowing about System 1 and System 2 is invaluable. People who have seen me coach will have seen the kind of questions that I ask in order to push back on the assumptions that System 1 is so happy for someone to make. Here are some of my favorite questions designed to get people past their System 1 and engage their System 2—adapted from a list in Coaching Letter #165:

Do you know that for sure?

What else would explain what’s going on?

Tell me again how you think those are connected.

What else could you do? (sometimes asked three or four times…)

How confident are you about that?

What data do you have?

What data could you collect?

Walk me through your logic.

Have you asked anyone about it?

What do you think the other person would say?

If it doesn’t work, why will that be?

How much would you bet that it will work?

What would you do if you couldn’t do what you just described?

How will you know that a change is an improvement?

So here are some ways to get more familiar with Professor Kahneman’s work.

Read Thinking, Fast and Slow, which is the book that gave him public recognition. I’ve listened to it two or three times, and a hard copy is almost always within reach. (I checked to see whether that is true, and indeed, there is a copy on the rolling cart right behind my chair that holds the books I’m currently using the most. The link is to the hardcover on Amazon, but you can also get it as an ebook and an audio book.)

Read Michael Lewis’s book about Professor Kahneman’s long professional relationship with Amos Tversky, which is a really great story on several levels, including not only the findings of their studies but also their lives. It’s called The Undoing Project and I highly recommend it. (The link is to the hardcover on Amazon, but you can also get it as an ebook and an audio book.) This Freakonomics episode is Michael Lewis talking about the book.

Listen to him being interviewed on The Knowledge Project podcast. This is worth listening to because it will take you to ideas beyond what’s in Thinking, Fast and Slow.

Watch the talk Professor Kahneman gave at Google not long after Thinking, Fast and Slow came out. It’s about an hour but again, well worth listening to/watching.

Listen to him being interviewed on the People I (Mostly) Admire podcast, which is a podcast I mostly like. This was after another of his books came out, Noise, which I only listened to part of. It is not, ironically, terribly succinct, so I can’t recommend that.

Read Richard Thaler’s book, Misbehaving. Professor Thaler also won the Nobel Prize in Economic Sciences, and his work overlaps a great deal with Kahneman’s and Tversky’s. Plus this book is more entertaining. (The link is to the hardcover on Amazon, but you can also get it as an ebook and an audio book.)

Finally, just some links to upcoming workshops (All the BTC events are full, sorry!):

Virtual Book Study: Systems for Instructional Improvement - first session 3:15-4:30pm Eastern, April 30, 2024. Free and online! Please share!

My friend and co-author Jennie Weiner will be speaking at UConn Hartford on May 13, 5-7 pm, about her new book Education Lead(her)ship—hope to see you there!

Book Launch Party: Equitable School Improvement - May 30, 2024. Woo hoo! See the picture at the top of this post!

As always, please let me know if there is anything I can do for you. Best, Isobel